AI just switched sides. Not metaphorically, but literally.

I've been saying for a while that the "AI for security" conversation was too focused on the defense side. This week handed me five stories that prove the point in ways that are hard to ignore. We're not in the "AI might eventually be used offensively" phase anymore. We're in the "an AI bot is autonomously probing your CI/CD pipeline right now" phase.

Welcome to this issue of The AppSec Signal. Let's get into it.

Claude Found 22 Firefox Vulnerabilities, With Automated Exploit Generation

Claude generated working exploits for 22 Firefox bugs automatically. This isn't an "AI helps write better fuzzing harnesses" story. This is original vulnerability research at a scale that would have taken a team of researchers weeks. The economics of security research just shifted permanently. If one researcher with a capable model can produce this volume of high-quality findings, the question security teams need to ask isn't "how do we use AI for vuln management?" It's "how do we patch faster than this pipeline produces findings?"

Source: Anthropic Blog

An AI Bot Was Autonomously Hacking GitHub Actions

An autonomous AI bot, in the wild, targeting GitHub Actions pipelines. tl;dr sec covered this in issue #318, and it's worth sitting with for a minute. CI/CD pipelines are already notoriously under-secured — most organizations treat them as a build artifact concern, not a security concern. But if you can compromise a GitHub Action, you can inject into the software supply chain, exfiltrate secrets, or pivot into production. AI just made that attack automated and scalable.
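
The story above doesn't say which weaknesses the bot went after, so treat this as one illustrative hardening check rather than a response to that specific attack. GitHub's own hardening guidance recommends pinning third-party actions to a full commit SHA instead of a mutable tag or branch. Here's a minimal Python sketch that flags `uses:` references that aren't pinned that way. The function name `unpinned_actions` and the regexes are my own assumptions, not part of any tool.

```python
import re

# Matches "uses: owner/repo@ref" lines, with or without a leading "- ".
USES_RE = re.compile(r"^[ \t]*(?:-[ \t]*)?uses:[ \t]*([^\s#]+)", re.MULTILINE)
# A full-length Git commit SHA is 40 lowercase hex characters.
SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA.

    Hypothetical helper: a text scan only, not a real YAML parser.
    """
    findings = []
    for ref in USES_RE.findall(workflow_text):
        ref = ref.strip("'\"")
        # Local actions and Docker images are out of scope for this sketch.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not SHA_RE.match(version):
            findings.append(ref)
    return findings
```

Run it over the text of each file in `.github/workflows/` and review anything it returns. It won't catch everything (script injection and over-broad token permissions are separate problems), but it's a cheap first pass.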

Trail of Bits Found Four Prompt Injection Techniques That Leaked Gmail From an AI Browser

This one is a masterclass in how to think about AI application security. Trail of Bits audited Comet — Perplexity's AI browser assistant — and found that external web content wasn't being treated as untrusted input. They demonstrated four injection techniques and five working exploits: fake CAPTCHAs, spoofed system instructions, fabricated user requests, and summarization hijacking — all capable of quietly exfiltrating Gmail data. The core finding is architectural: AI agents that browse the web on your behalf inherit every malicious instruction on every page they visit. Your attack surface isn't just your code anymore. It's every URL your AI agent touches.
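
To make "treat web content as untrusted input" concrete, here's a minimal Python sketch of two common mitigations: wrapping page text as clearly delimited data, and requiring explicit user confirmation for sensitive actions once the agent has read anything from the web. Everything in it (`AgentSession`, `SENSITIVE_ACTIONS`, the tag name) is hypothetical and is not how Comet or any real product works. Delimiters alone don't stop prompt injection, which is why the confirmation gate matters more.

```python
import html
from dataclasses import dataclass

# Hypothetical set of actions that could move data out of the user's account.
SENSITIVE_ACTIONS = {"read_email", "send_email", "submit_form", "open_url"}


@dataclass
class AgentSession:
    tainted: bool = False  # True once any untrusted web content has been read

    def ingest_page(self, page_text: str) -> str:
        """Wrap page text as data and mark the session as tainted."""
        self.tainted = True
        # Escape angle brackets so the page cannot close the wrapper itself.
        safe = html.escape(page_text)
        return f"<untrusted_web_content>\n{safe}\n</untrusted_web_content>"

    def may_run(self, action: str, user_confirmed: bool = False) -> bool:
        """Gate sensitive actions behind user confirmation in a tainted session."""
        if action not in SENSITIVE_ACTIONS:
            return True
        if not self.tainted:
            return True
        return user_confirmed
```

The design choice is that trust follows the data, not the model's judgment: once a page has been read, the agent can no longer touch Gmail on its own say-so, no matter how convincing the fake CAPTCHA or spoofed system instruction was.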

Build the Glue, Not the Gun: What Security Teams Should Actually Build With AI

Ross Haleliuk at Venture in Security wrote the clearest framing I've seen on this: security teams should use AI to automate internal "glue work" — the documentation, communication, triage coordination, and workflow plumbing that consumes most of a security engineer's actual day. Not to rebuild vendor products, not to build detection engines from scratch. The reasoning is solid: you don't have the scale advantage, the liability exposure is real, and your vendors have threat intelligence you'll never replicate. Save your engineering cycles for the workflows only you understand. Honestly, this matches what I see when I talk to AppSec teams — the biggest gains aren't coming from fancy AI security tools. They're coming from someone who automated the ticket-to-Jira-to-Slack-to-oncall pipeline.

Source: Ross Haleliuk’s Substack

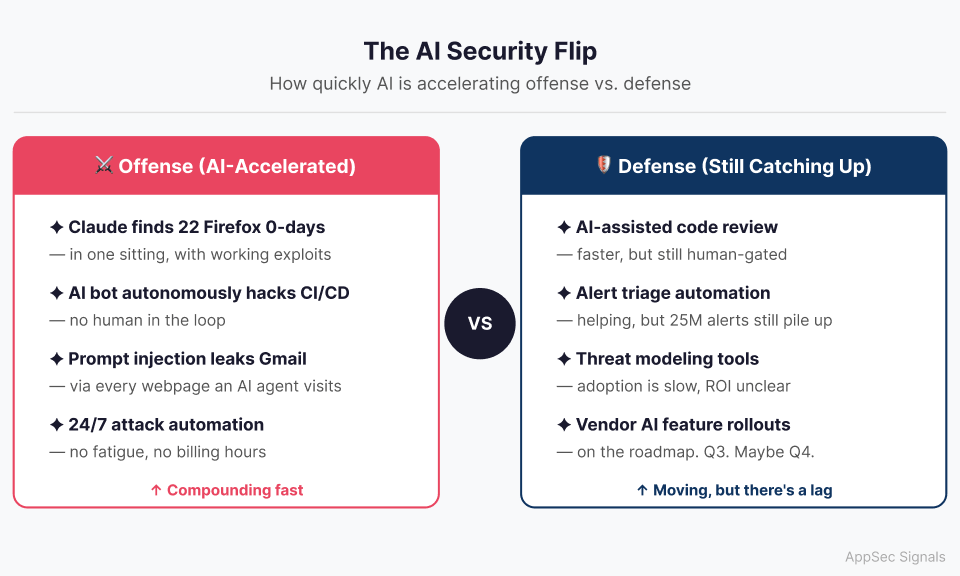

Opinion: What I Think About the AI Offense/Defense Imbalance

I firmly believe AI is going to help offense more than defense, at least for the next few years. Defenders have to be right every time. Attackers only need to be right once. AI gives offense a massive scale subsidy: one motivated attacker with a capable model can now produce vulnerability research at a pace that used to take a team of three to five people. An autonomous bot can probe attack surfaces 24/7 without fatigue, without billing hours, without a visa requirement. The asymmetry that already existed just got wider.

What I keep coming back to is this: when Claude can find 22 Firefox 0-days in one sitting, your patch velocity becomes a more important metric than your detection coverage. You can't detect your way out of a world where novel exploits are being generated faster than your vuln management cycle runs. The orgs that are going to handle this well are the ones treating secure-by-design as a first-class constraint, not as a compliance checkbox, not as something the security team adds at the end.

If this made you think, even if you disagree, reply and tell me what you'd push back on. I genuinely read every response.

— Amir

Thanks for reading The AppSec Signal, DevArmor’s newsletter for security professionals.

Have feedback or ideas for what we should cover next?

Feel free to reach out - [email protected]