What RSAC 2026 actually told us about the future of AppSec

The old AppSec model broke. RSAC just made it official.

I've been processing everything from RSAC and BSidesSF, and I keep landing on the same conclusion: the AppSec model we've been running for a decade finally hit the wall. AI is rewriting both sides of the equation: offense is scaling and defense tools are scrambling. Meanwhile, the supply chain took multiple direct hits, and the vendor floor was 37% "AI security" booths with nobody agreeing on what that means.

Let’s get into it!

This Week's Signals

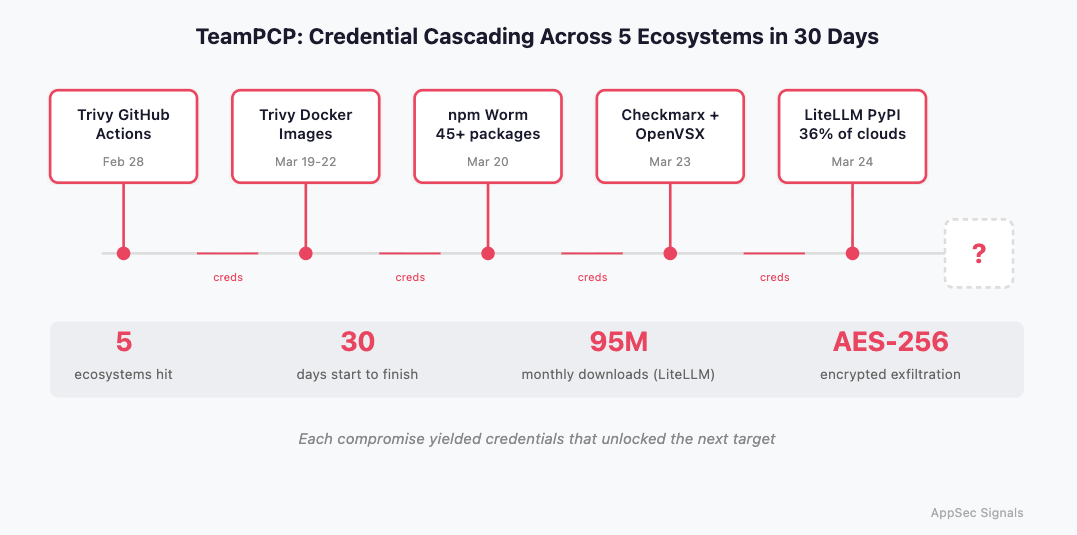

TeamPCP Just Ran a Month-Long Masterclass in Cascading Supply Chain Attacks

This is the most sophisticated supply chain campaign I've seen in a while. TeamPCP compromised Trivy GitHub Actions in late February, then used the credentials it stole there to pivot to Trivy Docker images, Checkmarx KICS, and OpenVSX extensions, launch a self-propagating npm worm (45+ packages), and finally compromise LiteLLM on PyPI, which sits in 36% of cloud environments.

The LiteLLM compromise is especially nasty. Version 1.82.8 used Python's .pth mechanism to execute on any Python invocation, even if LiteLLM was never imported. It harvested SSH keys, cloud creds, and K8s secrets, deployed privileged pods for lateral movement, and exfiltrated everything encrypted with AES-256 + RSA-4096. Each compromise yielded credentials that unlocked the next target. Endor Labs assesses the campaign is "almost certainly not over."
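If you want to see what the .pth trick looks like on your own machine, here is a minimal sketch. It relies on one documented behavior of Python's `site` module: any line in a `.pth` file that starts with `import` followed by a space or tab gets executed at interpreter startup. The function names are my own, and this is not a detector for the TeamPCP payload specifically. It only lists the lines that would run so a human can review them.

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(directory):
    """Return (file, line) pairs for .pth lines that Python would execute at startup."""
    findings = []
    for pth in sorted(Path(directory).glob("*.pth")):
        for line in pth.read_text(errors="replace").splitlines():
            # The site module runs any .pth line that starts with "import"
            # followed by a space or tab; other lines are treated as paths.
            if line.startswith(("import ", "import\t")):
                findings.append((pth.name, line.strip()))
    return findings


def candidate_dirs():
    """Collect the site-packages directories for the current interpreter."""
    dirs = {sysconfig.get_paths()["purelib"], site.getusersitepackages()}
    # site.getsitepackages() may be missing in some older virtualenv setups.
    dirs.update(getattr(site, "getsitepackages", lambda: [])())
    return [d for d in sorted(dirs) if Path(d).is_dir()]


if __name__ == "__main__":
    for d in candidate_dirs():
        for name, line in executable_pth_lines(d):
            # Truncate long lines so obfuscated one-liners stay readable.
            print(f"{d}/{name}: {line[:120]}")
```

Expect some legitimate hits: editable installs and a few packaging tools ship `.pth` files with import lines. The point is to know which ones you have and to notice when a new one shows up.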

🔗 Wiz · Socket · Endor Labs · Latio Tech

OpenAI Dropped SAST from Codex Security

OpenAI explained why Codex Security deliberately doesn't include a SAST report. Their argument: SAST tracks dataflow but can't tell you whether a security check actually works. "There's a big difference between 'the code calls a sanitizer' and 'the system is safe.'" Instead, Codex starts with the repo itself (architecture, trust boundaries, intended behavior), generates micro-fuzzers, uses z3-solver for constraint reasoning, and runs sandboxed PoC exploits to separate real from theoretical vulns. Across 1.2M commits, they found 10,561 high-severity issues with a claimed 50% false-positive reduction.
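To make the "calls a sanitizer" vs. "is safe" gap concrete, here is a toy sketch. It is not how Codex Security works internally; both sanitizers and the `find_bypasses` helper are hypothetical, and I'm using brute-force enumeration over short inputs rather than real fuzzing or z3. A dataflow check would see that `naive_sanitize` is called and move on. Actually exercising it shows the check doesn't hold.

```python
from itertools import product


def naive_sanitize(path):
    """Hypothetical sanitizer: strips "../" in a single pass."""
    return path.replace("../", "")


def strict_sanitize(path):
    """Hypothetical fix: repeat the strip until nothing is left to remove."""
    while "../" in path:
        path = path.replace("../", "")
    return path


def find_bypasses(sanitizer, alphabet="./", max_len=6):
    """Try every short input and return those whose output still contains "../"."""
    bypasses = []
    for length in range(1, max_len + 1):
        for chars in product(alphabet, repeat=length):
            candidate = "".join(chars)
            if "../" in sanitizer(candidate):
                bypasses.append(candidate)
    return bypasses


if __name__ == "__main__":
    print("naive:", find_bypasses(naive_sanitize))
    print("strict:", find_bypasses(strict_sanitize))
```

The single-pass version lets inputs like `....//` through, because removing the inner `../` leaves a fresh `../` behind. The looping version has no bypass in this search space, which is a much weaker claim than "safe," but it is evidence a dataflow trace alone can't give you.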

The framing matters more than the product. The biggest AI company in the world just said "we looked at SAST and decided it wasn't the right starting point."

🔗 OpenAI

AI Just Beat 99% of Human Hackers in CTF Competitions. For $5,000

Cyber startup Tenzai entered customized OpenAI and Anthropic models into six elite CTF competitions and placed in the top 1% out of ~125,000 humans. Total cost: $5,000. Separately, Claude Opus 4.6 found 22 Firefox vulnerabilities and produced 2 working exploits for ~$4,000.

AI-assisted offensive security is a production capability. As Tenzai's cofounder put it: "This is rapidly getting out of the realm of nations and military intelligence organizations." The cost curve is going in one direction, and it should inform how we think about defense.

🔗 Forbes · tl;dr sec #319

The RSAC Vendor Floor: Land Grabs, MCP Demands, and a $15B Quarter

The Omdia Cybersecurity Titans Index posted another 12.2% quarter: 20 tracked vendors hit $15.19B in combined revenue. Palo Alto dropped ~$400M on Koi Security for agentic AI protection. Check Point grabbed three Israeli startups for ~$150M. The land grab is real.

But the more interesting signal came from BSidesSF: security leaders said they won't consider new vendors without MCP capabilities, and build-vs-buy is getting muddier as LLMs lower the barrier to building your own security tooling. Andy Ellis examined all 607 RSAC exhibitors and found the "AI security" label so overloaded it's essentially meaningless for buyer comparison.

The Old AppSec Categories Are Breaking, and Nobody's Waiting for Gartner to Catch Up

Frank Wang and Cybersecurity Pulse both landed on the same conclusion from different angles this week. Wang argues the traditional analyst frameworks (Magic Quadrants, Forrester Waves) are obsolete because companies now exist on "a wildly diverse spectrum of AI literacy." He's seeing orgs where 95-100% of code is AI-generated next to shops under 10%. Averaging those realities produces guidance useless to both. His take: practitioners need to define emerging categories in real time. He's starting with "AI-enabled product security": companies like DevArmor applying AI to threat modeling and design reviews.

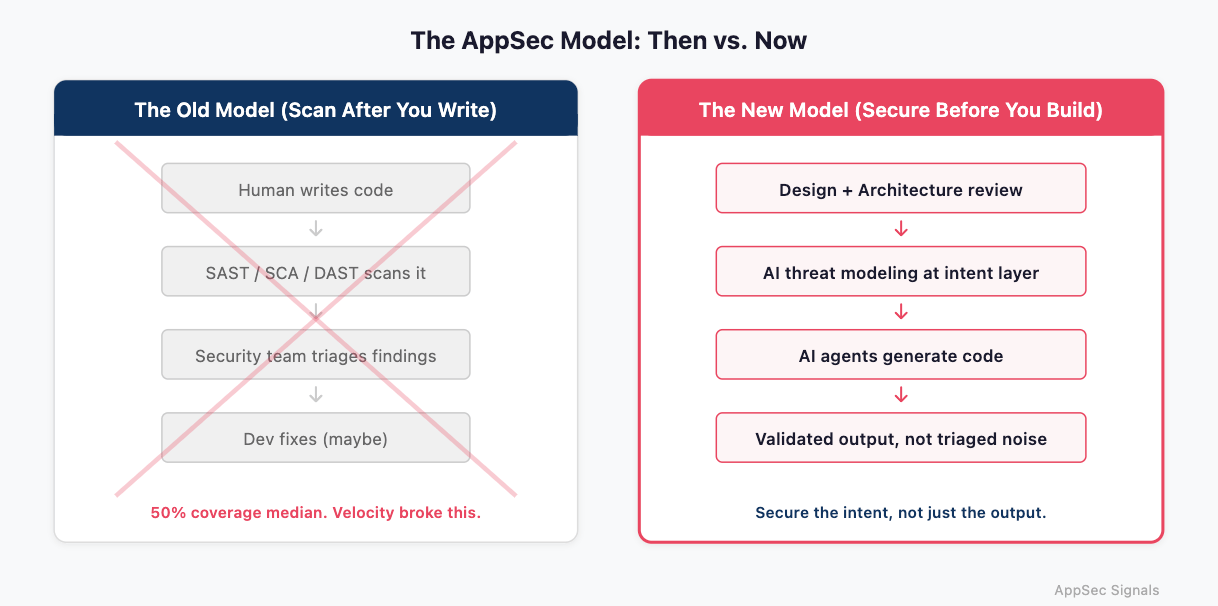

Cybersecurity Pulse names the root cause: the scan-after-you-write model was already struggling, and AI-generated code broke it completely. When velocity triples but your security checkpoint stays fixed, the coverage math falls apart. The fix isn't scanning faster; it's shifting security to the design phase, before code exists.
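The coverage math is simple enough to sketch. The numbers below are made up for illustration (they are not from Cybersecurity Pulse), and `review_coverage` is a hypothetical helper that assumes a checkpoint with a fixed weekly capacity.

```python
def review_coverage(changes_per_week, reviews_per_week):
    """Fraction of changes a fixed-capacity checkpoint can examine."""
    if changes_per_week <= 0:
        return 1.0
    return min(1.0, reviews_per_week / changes_per_week)


# Hypothetical team: the checkpoint keeps up today, then velocity triples.
baseline = review_coverage(changes_per_week=100, reviews_per_week=100)
tripled = review_coverage(changes_per_week=300, reviews_per_week=100)

print(f"baseline coverage: {baseline:.0%}")
print(f"after 3x velocity: {tripled:.0%}")
```

Under these assumptions a checkpoint that covered everything now sees a third of what ships, and scanning somewhat faster only nudges that number.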

What I Actually Think About the Post-RSAC World

The AppSec industry just went through its "before and after" moment. We walked into RSAC with a model that assumed human developers write code at human speed and security teams scan it downstream. Every one of those assumptions is now wrong.

AI coding agents have decoupled implementation speed from human capacity. That's a structural break. The entire "shift left to the code" philosophy becomes a rearguard action. You're scanning the output of a firehose and calling it a security program.

And look at the offensive side. Tenzai's AI placed in the top 1% in CTFs for $5,000. Claude found 22 Firefox vulnerabilities and produced two working exploits for about $4,000. TeamPCP hit five package ecosystems in 30 days. The attack surface is expanding at machine speed and the defenders are still arguing about SAST vs. DAST.

Security has to move upstream of code. Way upstream. Into design, architecture, the decisions that create risk before a single line gets generated. That's what we're building at DevArmor. I'm biased, but the evidence is getting hard to ignore. The companies that figure out how to secure the intent rather than the output are the ones that will matter in two years.

Until Next Week

RSAC hangover is real. If we connected at the conference and I haven't gotten back to you yet, I will. Probably.

What's your take on the post-RSAC landscape? Hit reply and tell me what you're seeing. I read every one.

— Amir

Thanks for reading The AppSec Signal, DevArmor’s newsletter for security professionals.

Have feedback or ideas for what we should cover next?

Feel free to reach out - [email protected]