Can butterflies be too dangerous to touch?

Anthropic seems to think so. Project Glasswing is the first time a frontier lab has stood up a $100M coalition specifically to handle the downstream security consequences of its own model, and deliberately withheld broader access to the underlying capability. It landed in the same week that Chris Hughes published the clearest dataset I've seen on how fast the defender side is falling behind, a16z released real numbers on AI agents and supply chain risk, and the NYT admitted the part every engineering leader has been quietly drafting in their head: we got very good at producing code and we haven't figured out how to review it.

A few assumptions that quietly held AppSec together for a decade started to give way this week. It's worth being honest about which ones.

This Week's Signals

Project Glasswing: Anthropic Gated Its Own Frontier Model

Anthropic launched Project Glasswing, a $100M coalition with Amazon, Apple, Broadcom, Cisco, CrowdStrike, the Linux Foundation, Microsoft, and Palo Alto Networks, anchored on a new frontier model called Claude Mythos Preview. Mythos has already surfaced tens of thousands of bugs across major OSes and browsers, and Anthropic is deliberately restricting broad access because the offensive potential is too high. It's the first concrete example I've seen of capability-gated release applied to a frontier model in production.

Why this matters: If a model can outpace the entire defender ecosystem at finding bugs, the old "scan, ticket, patch" loop stops being the bottleneck. The interesting vendor question isn't whether your rule pack is good, it's whether your product can sit downstream of something like Mythos and still be useful.

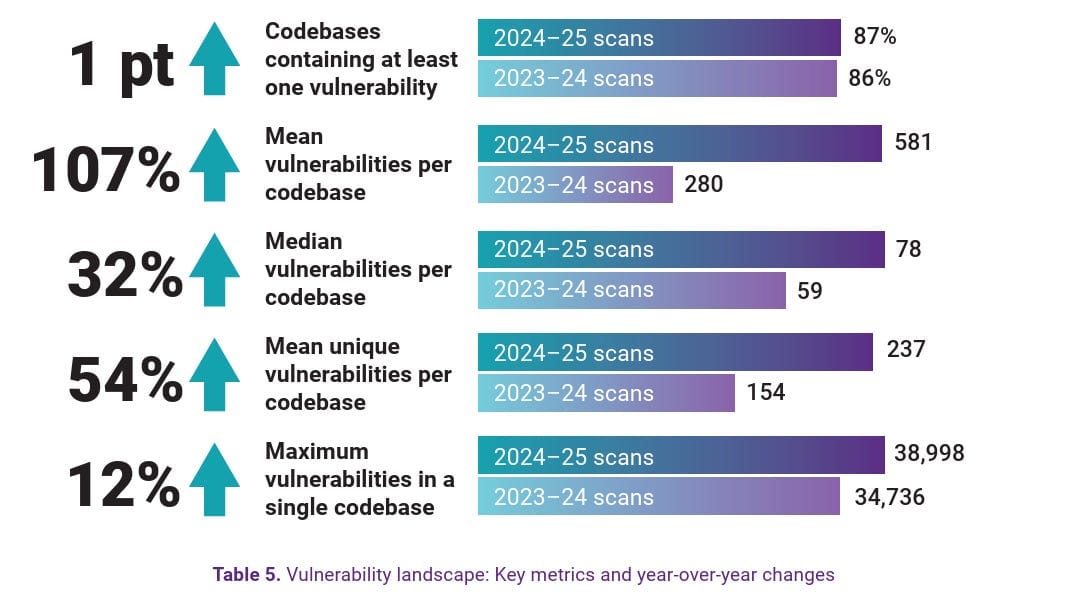

Vulnpocalypse: The Numbers Behind Why Glasswing Had to Happen

Chris Hughes' companion deep dive is the data case for why Glasswing exists: 48,000+ CVEs disclosed in 2024, 581 vulnerabilities per codebase on average (Synopsys OSSRA), and XBOW reaching #1 on HackerOne's US leaderboard in 2025. Defender mean time to remediate (MTTR) sits at ~30.6 days; attacker mean time to exploit (MTTE) is ~19.5 days (Mandiant M-Trends).

The gap worth sitting with: MTTE (~19.5 days) vs. MTTR (~30.6 days) isn't a triage problem you fix with better tickets. It's structural. Any strategy that pegs success to "reducing CVE count" is measuring effort on the wrong side of that gap.
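To put a rough number on that gap, here's a back-of-the-envelope sketch. It reads MTTR as mean time to remediate and MTTE as mean time to exploit, and it treats both as simple averages, which is an assumption: real distributions are skewed, so take the output as an illustration rather than a forecast. The function names are mine, not from the report.

```python
# Figures quoted above (Mandiant M-Trends, as cited in the deep dive).
MTTR_DAYS = 30.6  # defender mean time to remediate
MTTE_DAYS = 19.5  # attacker mean time to exploit


def exposure_gap_days(mttr: float, mtte: float) -> float:
    """Average days a flaw is exploitable before the fix lands (0 if the fix comes first)."""
    return max(0.0, mttr - mtte)


def required_speedup(mttr: float, mtte: float) -> float:
    """Factor by which remediation would need to speed up just to tie the attacker."""
    return mttr / mtte


gap = exposure_gap_days(MTTR_DAYS, MTTE_DAYS)
speedup = required_speedup(MTTR_DAYS, MTTE_DAYS)
print(f"average exposure gap: {gap:.1f} days")        # 11.1 days
print(f"speedup needed to break even: {speedup:.2f}x")  # 1.57x
```

On these averages, a patch program would need to run more than one and a half times faster just to reach parity, which is why better ticket hygiene alone doesn't close it.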

Et Tu, Agent? The Supply Chain Study Worth Reading Twice

a16z analyzed 117,000 real dependency changes and found AI coding agents picked known-vulnerable versions about 50% more often than humans, with fixes that were harder to land (36.8% required major-version upgrades vs. 12.9% for human-authored changes).

Net effect across the dataset: agent-driven work produced a net vulnerability increase of 98, while human-authored work produced a net reduction of 1,316. Adjacent finding: ~20% of AI-recommended packages don't exist on the registry, and 43% of hallucinated names repeat across queries, which is what makes slopsquatting a real attack rather than a theoretical one.

What to do with this: If your platform lets agents auto-merge dependency PRs without a policy gate, the a16z numbers say you're increasing risk on average. Add a policy layer between the agent and the merge, and measure the delta.
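Here's a minimal sketch of what such a policy layer could look like. Everything in it is hypothetical: the `DependencyChange` shape, the rule set, and the package names are made up for illustration, and the registry and vulnerability data are passed in as plain sets rather than fetched from a real registry or advisory feed. It also assumes plain dotted numeric versions like `1.2.0`.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set, Tuple


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN_REVIEW = "needs_human_review"
    BLOCK = "block"


@dataclass(frozen=True)
class DependencyChange:
    package: str
    old_version: Optional[str]  # None when the dependency is newly added
    new_version: str
    author_is_agent: bool


def major(version: str) -> int:
    """Leading numeric component of a dotted version such as '4.17.21'."""
    return int(version.split(".")[0])


def evaluate(
    change: DependencyChange,
    registry_packages: Set[str],
    vulnerable_versions: Set[Tuple[str, str]],
) -> Tuple[Decision, str]:
    # Rule 1: a name missing from the registry snapshot may be a hallucinated
    # package, which is exactly what slopsquatting preys on.
    if change.package not in registry_packages:
        return Decision.BLOCK, "package not found in registry snapshot"
    # Rule 2: never move to a version with a known vulnerability.
    if (change.package, change.new_version) in vulnerable_versions:
        return Decision.BLOCK, "target version has a known vulnerability"
    if change.author_is_agent:
        # Rule 3: a brand-new dependency from an agent gets a human look.
        if change.old_version is None:
            return Decision.NEEDS_HUMAN_REVIEW, "agent-added new dependency"
        # Rule 4: so does an agent-authored major-version change.
        if major(change.new_version) != major(change.old_version):
            return Decision.NEEDS_HUMAN_REVIEW, "agent-authored major-version change"
    return Decision.ALLOW, "no policy rule triggered"


# Hypothetical data standing in for a registry index and an advisory feed.
REGISTRY = {"examplelib", "otherlib"}
VULNERABLE = {("examplelib", "1.2.0")}

changes = [
    DependencyChange("examplelib", "1.1.0", "1.2.0", author_is_agent=True),
    DependencyChange("made-up-helper", None, "0.1.0", author_is_agent=True),
    DependencyChange("otherlib", "1.4.0", "2.0.0", author_is_agent=True),
    DependencyChange("otherlib", "1.4.0", "1.5.0", author_is_agent=True),
]
for change in changes:
    decision, reason = evaluate(change, REGISTRY, VULNERABLE)
    print(f"{change.package} {change.new_version}: {decision.value} ({reason})")
```

Logging each decision alongside the PR is what makes the "measure the delta" part possible: you can count how many agent changes were blocked or escalated before and after the gate went in.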

WhatsApp's TEE Audit: What Secure-by-Design Looks Like in Practice

Trail of Bits audited WhatsApp's Private Inference, the system that runs AI features on encrypted messages without Meta seeing the plaintext. Even with one of the more ambitious secure-by-design efforts shipping, they found 28 issues including 8 high-severity: config files loading after attestation, ACPI tables excluded from measured boot, firmware self-reporting its own patch level. Meta fixed all of them before publication.

Why I'm flagging it: 28 issues found in design-and-audit and fixed before users touch the product is a completely different posture than 581 per codebase chased through a ticket queue after the fact. Not a silver bullet, but much cheaper unit economics per bug caught.

"We found 28 issues across the stack, including 8 high-severity vulnerabilities. Every single finding came from the gap between 'the architecture is sound' and 'the implementation handles every edge case.' Meta fixed all of them."

What NYT Revealed about the New SDLC Bottleneck

The NYT ran the piece most engineering leaders have been quietly rehearsing. Meta CTO Andrew Bosworth is quoted saying projects that used to need hundreds of engineers now need tens, and months compress into days. The harder problem the article surfaces, and doesn't fully answer, is that human review capacity hasn't scaled with generation capacity. Cursor bought Graphite. Anthropic and OpenAI have both shipped review agents. None of them have demonstrably closed the loop yet.

Here's what I think: The security-relevant question isn't "is the diff correct?" It's "does anyone still understand the architecture this code is landing in?" That's a threat modeling problem, not a review problem, and it's the part that gets skipped when speed goes up.

My Take: Scanner-Centric AppSec Is Running Out of Runway

The model most enterprise AppSec programs run on in 2026 is built on four assumptions: that vulnerabilities are discovered at a manageable rate, disclosed responsibly, and patched within a reasonable window, and that the population is small enough for humans to triage against a Jira queue. This week's stories make it hard to keep all four standing.

The discovery rate has moved by at least an order of magnitude (Vulnpocalypse). The timing math is upside down: Mandiant's MTTE of ~19.5 days vs. defender MTTR of ~30.6 days means even a perfectly executed patch program is, on average, late. The tooling we're rolling out to go faster is tilting the dependency graph in the wrong direction (a16z). And the open source ecosystem carrying most of this code is visibly under-resourced.

Scanners don't go away. SAST, SCA, secrets detection, and runtime monitoring still do useful work. But they stop being the center of the program. The center moves to design-time decisions: threat modeling before the code exists, explicit trust boundaries, dependency policies enforced at the IDE and agent layer, bug-class elimination rather than bug-instance counting. WhatsApp's TEE audit is the current best example, and even there 28 findings landed, so this isn't a silver bullet. It's just better unit economics on every bug you do catch.
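For a sense of what "explicit trust boundaries" can mean at design time, here's a toy sketch of a threat model expressed as data and checked before any feature code exists. The zones, control names, and components are all invented for illustration, and this is not how any particular tool or the WhatsApp audit works. It's just one way to make a boundary rule machine-checkable.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set, Tuple


@dataclass(frozen=True)
class Component:
    name: str
    zone: str  # hypothetical trust zone label, e.g. "internet", "app", "data"


@dataclass(frozen=True)
class Flow:
    source: Component
    target: Component
    controls: FrozenSet[str] = frozenset()


# Controls every flow must carry when it crosses from one zone into another.
REQUIRED_CONTROLS: Dict[Tuple[str, str], Set[str]] = {
    ("internet", "app"): {"authn", "input_validation"},
    ("app", "data"): {"authz", "encryption_in_transit"},
}


def missing_controls(flow: Flow) -> Set[str]:
    """Return the controls a flow lacks for the boundary it crosses."""
    crossing = (flow.source.zone, flow.target.zone)
    if crossing[0] == crossing[1]:
        return set()  # stays inside one zone, no boundary crossed
    required = REQUIRED_CONTROLS.get(crossing)
    if required is None:
        # Nobody declared this crossing, so treat it as a design finding.
        return {"undeclared_boundary_crossing"}
    return required - flow.controls


def review(flows: List[Flow]) -> Dict[str, Set[str]]:
    """Map 'source -> target' to missing controls, for flows with findings."""
    findings = {}
    for flow in flows:
        missing = missing_controls(flow)
        if missing:
            findings[f"{flow.source.name} -> {flow.target.name}"] = missing
    return findings


browser = Component("browser", "internet")
api = Component("api", "app")
db = Component("orders_db", "data")

design = [
    Flow(browser, api, frozenset({"authn", "input_validation"})),
    Flow(api, db, frozenset({"authz"})),  # missing encryption_in_transit
    Flow(browser, db),                    # crossing nobody declared
]
for path, missing in review(design).items():
    print(f"{path}: missing {sorted(missing)}")
```

The point of the shape is that a missing control or an undeclared crossing shows up as a failed check on the design, not as a finding against shipped code.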

My prediction, and I'll happily be wrong about it in public: by the end of 2027, "vulnerability management" starts to feel about as dated as "antivirus." The teams that do well will have quietly moved their center of gravity toward exposure management, secure code generation guardrails, threat-model-as-code, and architectural risk engineering. The ones still measuring success by CVE counts in Jira are going to have a harder time explaining the story than they think.

If you disagree, especially on "scanners stop being the center," I'd like to hear the counter-argument. Reply to this email, or push back in person at VulnCon.

Until Next Week

One question I'd like your answer to: what's one assumption your AppSec program still runs on that you think is quietly becoming invalid? Reply to this email. I read everything that comes in, and the best answers tend to shape what ends up in the next issue.

-Amir

Thanks for reading The AppSec Signal, DevArmor’s newsletter for security professionals.

Have feedback or ideas for what we should cover next?

Feel free to reach out - [email protected]