When the platform you build on is the vulnerability

The platforms people trust to build on are themselves getting breached. That changes things.

I spent a lot of the past two weeks reading incident reports from vibe coding platforms and thinking about what they mean for the rest of us. Not in the abstract "AI code is risky" sense. In the concrete "a $6.6 billion platform left every user's source code accessible for 48 days and closed the bug report" sense. Meanwhile, MOVEit is back with another CVSS 9.8, OWASP published its first quarterly catalog of real AI exploits, and Wiz announced it is embedding security scanning directly inside Lovable. The throughline: the infrastructure layer that AI-generated code runs on is now itself the attack surface.

This Week's Signals

Lovable Left Every Project Exposed for 48 Days

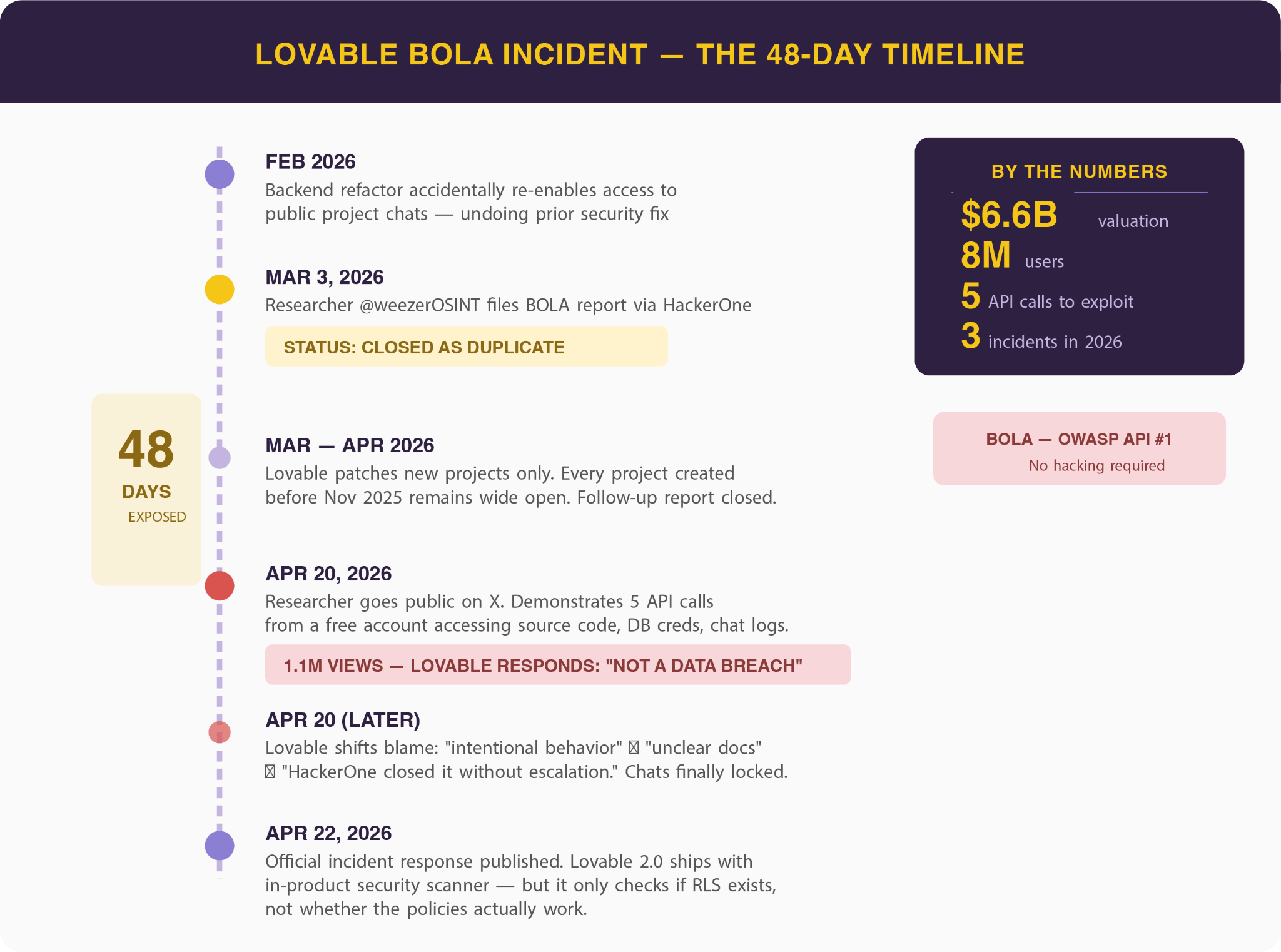

Lovable, the $6.6 billion vibe coding platform with eight million users, had a BOLA vulnerability that exposed source code, database credentials, and AI chat histories for every project created before November 2025. A researcher made five API calls from a free account and accessed another user's profile, projects, and source code. The vulnerability stayed open for 48 days after Lovable closed the initial bug bounty report without escalation. This was the third documented security incident for the platform in 2026 alone, following a February disclosure where 16 vulnerabilities (six critical) leaked data from 18,000 users.

Why this matters: Lovable isn't some fringe tool. Eight million users are building production apps on it. When the platform itself has basic access control flaws, every app built on it inherits that risk, and the developers using it have zero visibility into the problem.
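
For readers who haven't run into the acronym, BOLA (broken object level authorization) means the server confirms who you are but never checks whether the object you asked for belongs to you. Here is a minimal Python sketch of that flaw class and its fix. This is not Lovable's code or API. The `Project` type, the `PROJECTS` store, and both handler functions are hypothetical names invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    project_id: str
    owner_id: str
    source: str


# Hypothetical in-memory store standing in for a real database.
PROJECTS = {
    "p1": Project("p1", owner_id="alice", source="print('alice app')"),
    "p2": Project("p2", owner_id="bob", source="print('bob app')"),
}


class Forbidden(Exception):
    """The caller is authenticated but not allowed to read this object."""


def get_project_vulnerable(requester_id: str, project_id: str) -> Project:
    # BOLA: the handler knows who is asking but never uses that fact.
    # Any logged-in user who supplies a valid ID gets the object back.
    return PROJECTS[project_id]


def get_project_checked(requester_id: str, project_id: str) -> Project:
    project = PROJECTS[project_id]
    # Object-level authorization: compare the object's owner to the caller
    # on every request, not just at login.
    if project.owner_id != requester_id:
        raise Forbidden(f"{requester_id} may not read {project_id}")
    return project
```

In this sketch, `get_project_vulnerable("alice", "p2")` hands Alice Bob's source code, while `get_project_checked("alice", "p2")` raises `Forbidden`. The fix is a single comparison, which is why this class of bug counts as a basic access control flaw.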

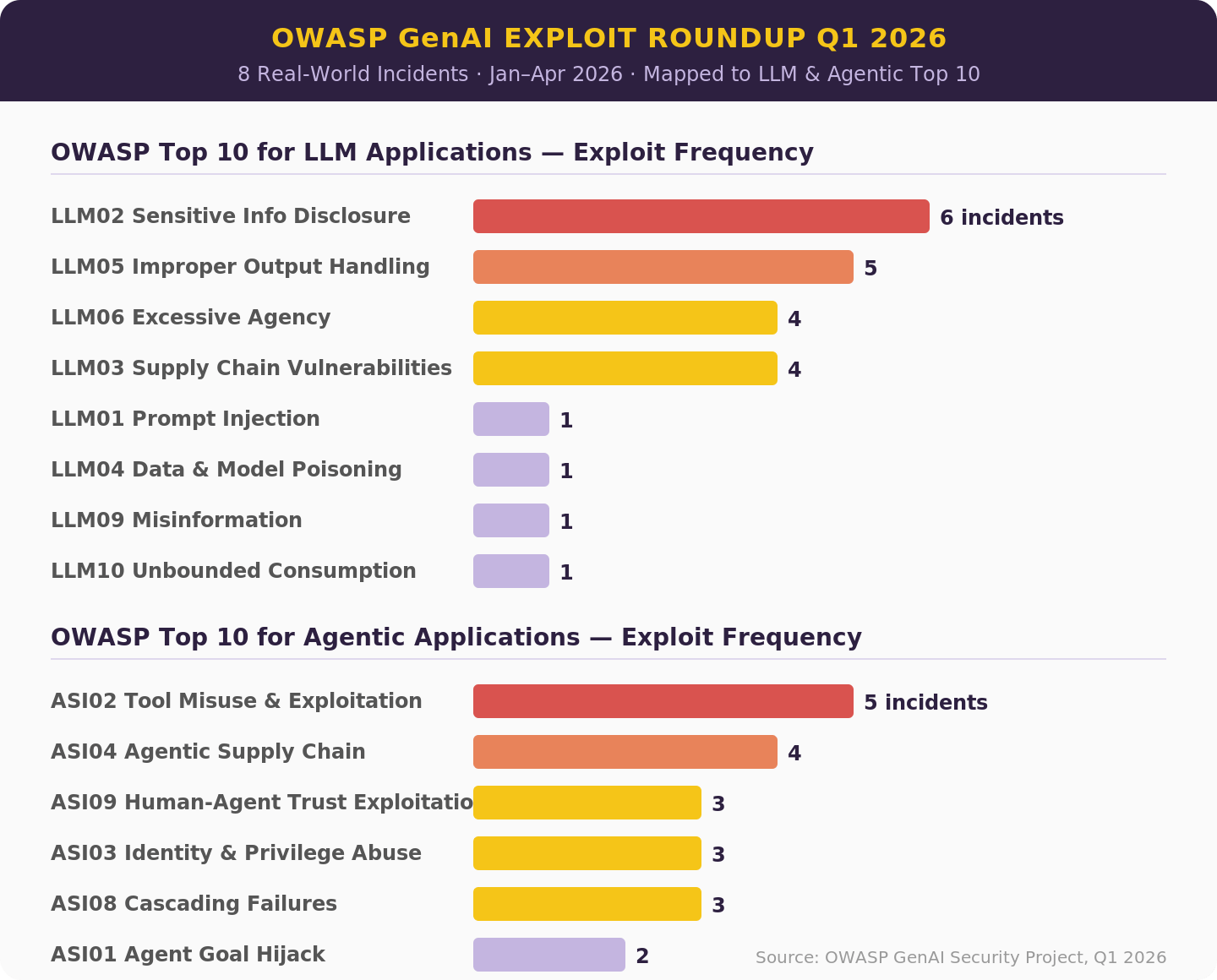

OWASP Published Its First GenAI Exploit Roundup

OWASP's GenAI Security Project released a Q1 2026 report cataloging real AI-related security incidents and exploit disclosures from January through April. The report maps each incident to the OWASP Top 10 for LLM Applications and the new Top 10 for Agentic Applications. The most important finding: most AI security events don't map to traditional CVE identifiers at all. Attackers are targeting agent identities, orchestration layers, and supply chains rather than discrete code flaws, and our vulnerability management systems weren't built for that.

Practical takeaway: If your AppSec program measures coverage by CVE tracking, you have a blind spot for the fastest-growing attack category. Read this report and ask whether your team has visibility into the architectural risks it catalogs.

Wiz Embeds Security Scanning Inside Lovable

Wiz announced an integration, generally available this month, that runs Wiz security scanning directly inside the Lovable platform. Vulnerabilities, secrets, and misconfigurations surface in Lovable's built-in security view where developers are already building. Separately, Wiz published research showing security risks in 20% of vibe-coded apps they analyzed. The timing is not accidental: this ships weeks after Lovable's most public security crisis.

What to watch: This is the first major security vendor embedding directly into a vibe coding platform rather than scanning from the outside. If it works, expect every vibe coding tool to ship with an embedded security layer by Q4. If it doesn't (because the fundamental architecture of these platforms makes post-hoc scanning insufficient), we'll know that too.

🔗 Wiz Blog

Exploits Now Routinely Arrive Before Patches

The Hacker News published a deep analysis labeling 2026 "The Year of AI-Assisted Attacks." The headline number: 28.3% of CVEs are now exploited within 24 hours of disclosure. The average time to remediate a known high or critical CVE is 74 days. Put those together and a vulnerability that is exploited on day one and fixed at the average pace stays exposed for roughly 73 days, and more than a quarter of CVEs now fall into that fast-exploit group. AI-generated malware is slipping past static analysis and signature scanners because it looks like real software, not malware.

My take: The 74-day MTTR against a 24-hour exploit window isn't a staffing problem. It's an architecture problem. No amount of hiring closes a 73-day gap. The teams that survive this will be the ones who moved critical security decisions upstream, into design and architecture, before the code exists to exploit.
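
The 73-day figure is plain subtraction, and it is worth running against your own numbers. Here is a small Python sketch of the calculation. The dates are placeholders I made up to match the averages quoted above, and `exposure_days` is a hypothetical helper, not part of any tool.

```python
from datetime import date, timedelta


def exposure_days(exploited: date, remediated: date) -> int:
    """Days a vulnerability was being exploited but not yet fixed."""
    # Clamp at zero: a fix that lands before exploitation leaves no gap.
    return max((remediated - exploited).days, 0)


# Placeholder dates matching the quoted averages, not real incident data.
disclosed = date(2026, 1, 1)
exploited = disclosed + timedelta(days=1)    # exploited within 24 hours
remediated = disclosed + timedelta(days=74)  # 74-day average remediation

gap = exposure_days(exploited, remediated)
print(gap)  # 73
```

Swap in disclosure, first-exploit, and fix dates from your own ticketing data to see how your gap compares with the 73-day average.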

Here is what I think:

THE PLATFORM LAYER IS THE NEW ATTACK SURFACE

For the past few years, the security conversation about AI-generated code has focused on the code itself. Does it have bugs? Does it ship with hardcoded credentials? Does it introduce architectural weaknesses? Those are real problems. But the Lovable incidents point to something different and, I think, more consequential. The platforms that millions of people use to generate and deploy that code are themselves full of basic security flaws. The risk isn't just in what the AI writes. It's in what the AI runs on.

The numbers tell the story. Lovable: three incidents in four months, 48 days of exposure, a closed bug bounty report, 18,000 users' data leaked in a separate incident. In a single week in April, Lovable, Vercel (through Context.ai), and Bitwarden's CLI all had security failures. Wiz's research found risks in 20% of vibe-coded apps. OWASP's Q1 roundup confirmed that attackers are targeting orchestration layers and agent identities, not just model outputs. The pattern is consistent: the infrastructure that AI code depends on is less mature than the code it produces.

This matters because most security programs are not set up to audit platform risk. You can scan the code your team writes. You can review the dependencies they pull. But when the platform that generates, hosts, and deploys the code has a BOLA vulnerability that exposes every project for 48 days, your team's secure coding practices are irrelevant. The security boundary has moved, and it now includes the build platform itself. That means vendor security assessments for vibe coding tools need to be as rigorous as what you'd run on a cloud provider.

I don't think we're going to stop using these platforms. The productivity gains are too real. But the security evaluation has to catch up. If you're running production workloads on a vibe coding platform today, what does your vendor risk assessment for that platform look like? How do you factor the platform risk into your threat model? If you have opinions on this, I’d love to chat.

See you at FwdCloudSec?

One more thing before I let you go. DevArmor is sponsoring FwdCloudSec in Bellevue on June 1st and 2nd, and we're co-hosting a happy hour on June 1st with the folks at C1. If you're going to be in the area, whether you're attending the conference or just happen to be in the Pacific Northwest, come grab a drink and talk shop. No slides, no pitches, just good conversation with people who care about this stuff. Here is the registration link. I would love to see some of you there.

-Amir

Thanks for reading The AppSec Signal, DevArmor’s newsletter for security professionals.

Have feedback or ideas for what we should cover next?

Feel free to reach out - [email protected]